You do not have a performance problem. You have a measurement problem. If every run starts from a different place, with random AI decisions, random camera movement, and random CPU frequencies, your team will burn days "optimizing" noise.

A consistent profiling scenario fixes that. Build one standalone worst-case scene, make it realistic enough to matter, and strip out the chaos that keeps lying to you. No more manual menu clicking. No more replaying half the game just to reach the hotspot. No more guessing whether the last change really moved the needle.

Start here: pick one scene or one deterministic route that is bad enough to matter, then lock it down. Fix the camera transform or move it through a spline. Remove random behavior or force the same seed. If the cost changes every run, your benchmark is broken.
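The two locks in that paragraph (a scripted camera path and a fixed seed) can be sketched in a few lines. This is a minimal, engine-agnostic sketch, not the module's method: `catmull_rom`, `camera_position`, and `run_scenario` are hypothetical names, the camera follows a uniform Catmull-Rom spline through assumed control points, and the AI "decision" is a stand-in for whatever your game actually randomizes.

```python
import random

Vec3 = tuple[float, float, float]


def catmull_rom(p0: Vec3, p1: Vec3, p2: Vec3, p3: Vec3, t: float) -> Vec3:
    """Uniform Catmull-Rom point between p1 (t=0) and p2 (t=1)."""
    t2, t3 = t * t, t * t * t
    return tuple(
        0.5 * (2 * b + (-a + c) * t + (2 * a - 5 * b + 4 * c - d) * t2
               + (-a + 3 * b - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )


def camera_position(points: list[Vec3], frame: int, total_frames: int) -> Vec3:
    """Camera position driven by frame index, not wall-clock time.

    Needs at least 4 control points and total_frames >= 2. The path runs from
    points[1] (first frame) to points[-2] (last frame).
    """
    segments = len(points) - 3
    u = min(max(frame / (total_frames - 1), 0.0), 1.0) * segments
    i = min(int(u), segments - 1)
    return catmull_rom(points[i], points[i + 1], points[i + 2], points[i + 3], u - i)


def run_scenario(seed: int, frames: int, points: list[Vec3]) -> list[tuple[Vec3, str]]:
    """Replay the scenario; the same seed and path always yield the same trace."""
    # A dedicated RNG instance keeps this sequence isolated from any other
    # code that draws from the global `random` module.
    rng = random.Random(seed)
    trace = []
    for frame in range(frames):
        cam = camera_position(points, frame, frames)
        ai_choice = rng.choice(("patrol", "attack", "flee"))  # hypothetical AI decision
        trace.append((cam, ai_choice))
    return trace
```

Driving the camera by frame index means a slow build and a fast build render exactly the same sequence of views. The trade-off is that wall-clock run length varies with performance, which is usually what you want from a benchmark.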

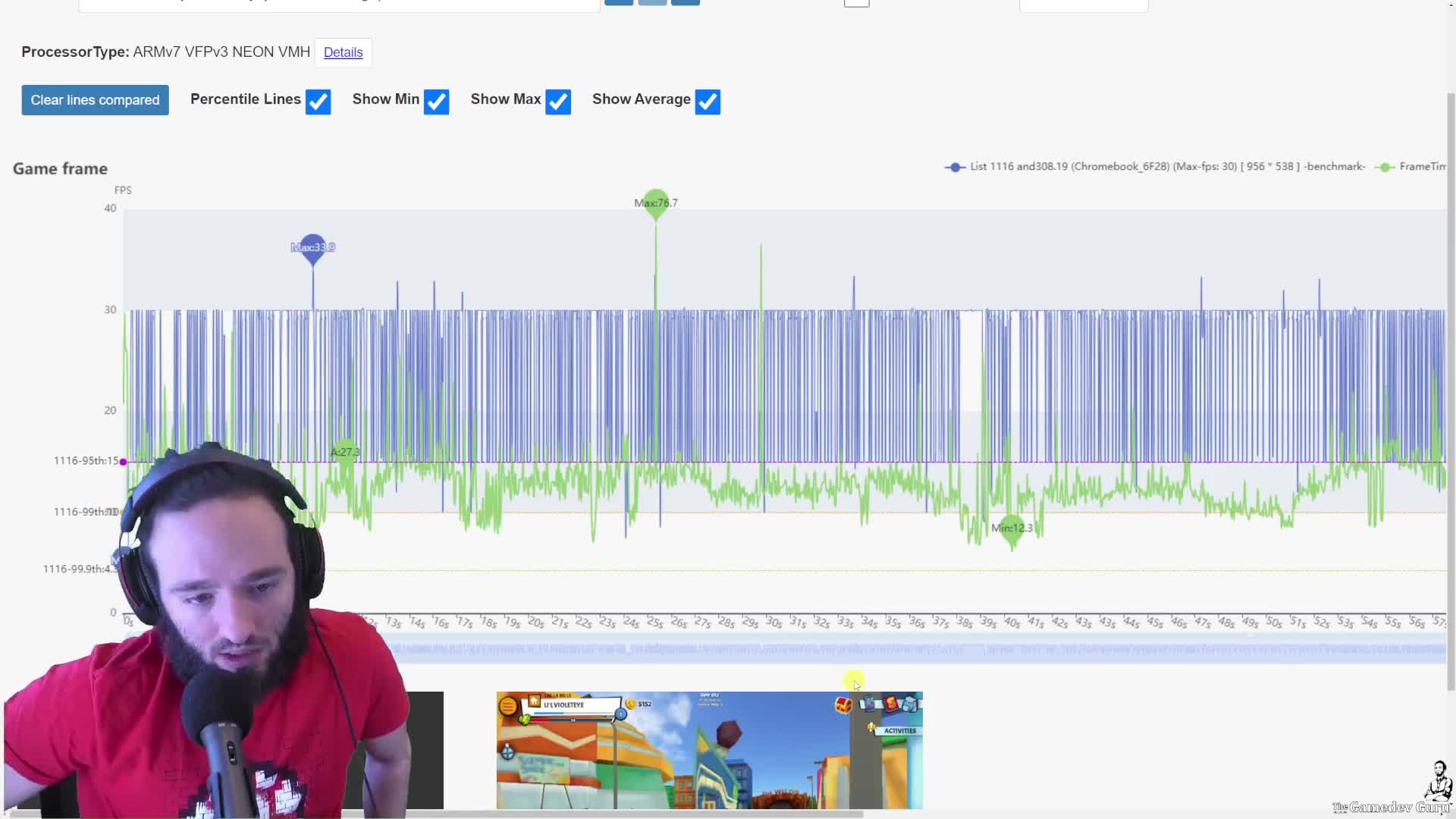

The proof is in the chart. When the scenario is deterministic, you can record frame time, FPS, quartiles, and percentiles, then compare build against build on the same device. That is where optimization stops being opinion and becomes a real production signal.
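Here is one way to turn a run into those numbers and compare two builds. It is a sketch using Python's standard `statistics` module, assuming your harness already dumps per-frame times in milliseconds; `summarize` and `compare` are hypothetical helpers, and the metric set is an illustrative choice.

```python
import statistics


def summarize(frame_times_ms: list[float]) -> dict[str, float]:
    """Summary statistics for one run (needs at least two frame times)."""
    # "inclusive" treats the samples as the whole population, so the
    # quartiles and percentiles stay within the observed min and max.
    q1, median, q3 = statistics.quantiles(frame_times_ms, n=4, method="inclusive")
    pct = statistics.quantiles(frame_times_ms, n=100, method="inclusive")
    mean_ms = statistics.fmean(frame_times_ms)
    return {
        "mean_ms": mean_ms,
        # Average FPS from mean frame time (frames / total time), not the
        # mean of per-frame FPS, which overweights fast frames.
        "avg_fps": 1000.0 / mean_ms,
        "q1_ms": q1,
        "median_ms": median,
        "q3_ms": q3,
        "p95_ms": pct[94],  # cut point 95 of 99
        "p99_ms": pct[98],  # cut point 99 of 99
    }


def compare(baseline: list[float], candidate: list[float]) -> dict[str, float]:
    """Percent change per metric, candidate versus baseline.

    Negative values on *_ms metrics and positive values on avg_fps mean
    the candidate build is faster.
    """
    a, b = summarize(baseline), summarize(candidate)
    return {key: 100.0 * (b[key] - a[key]) / a[key] for key in a}
```

Tail percentiles such as p95 and p99 usually expose hitches that the mean hides, so they are worth charting next to the median.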

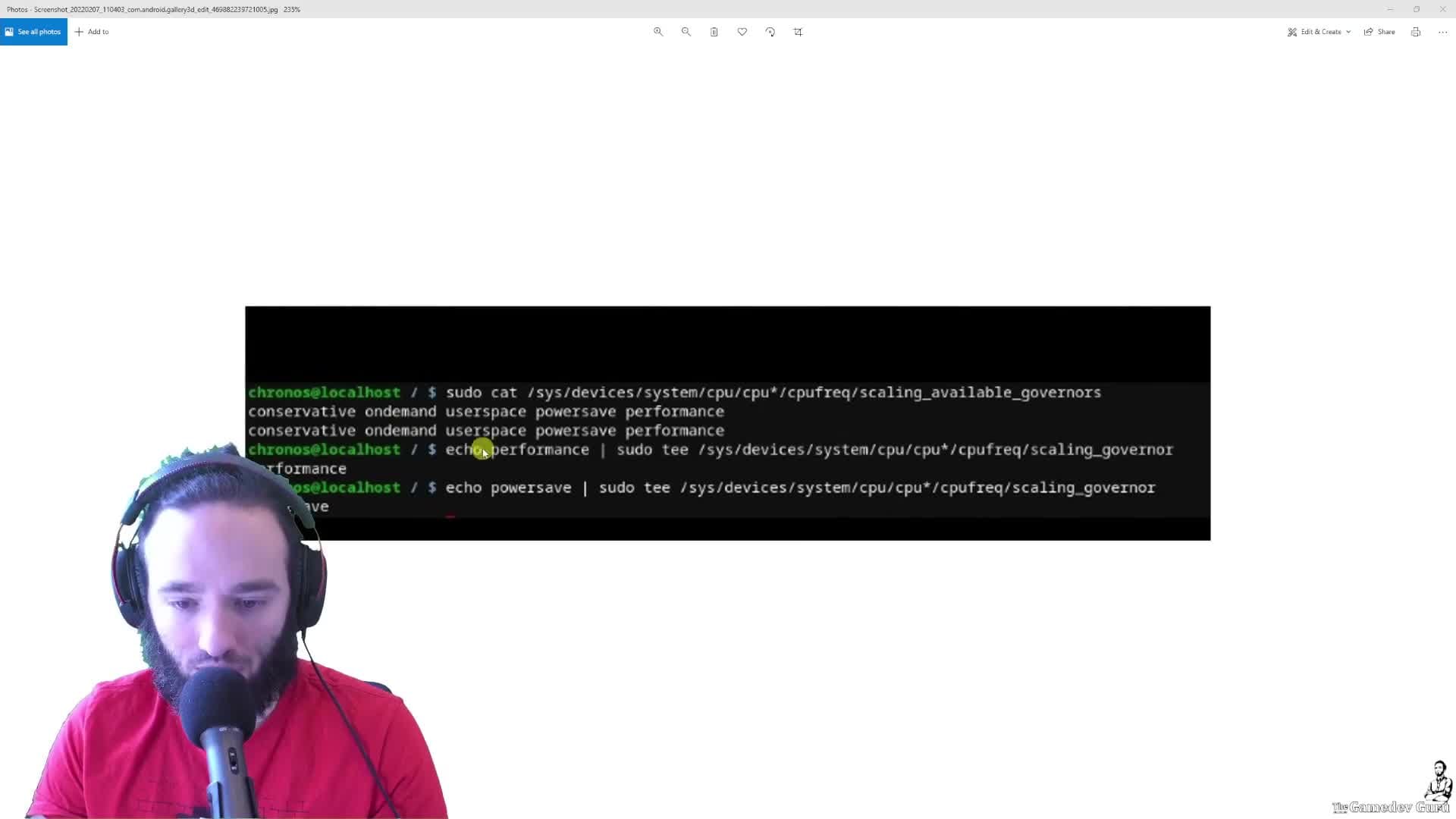

Check this too: CPU frequency noise will quietly poison your results. On laptops, phones, Chromebooks, and Android devices, CPU frequency can swing with load, power state, or thermal throttling. If you want trustworthy numbers, lock down CPU frequency behavior as much as the platform allows before you compare runs.
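On Linux, and on rooted Android devices that expose the same interface, one common lever is the cpufreq governor in sysfs (`/sys/devices/system/cpu/cpuN/cpufreq/scaling_governor`). The sketch below assumes root access and a driver that offers a `performance` governor; `set_cpu_governor` and `restore_cpu_governors` are hypothetical helpers. It does not stop thermal throttling, which can still cap frequency, so record device temperature or keep runs short and cooled between builds.

```python
from pathlib import Path

SYSFS_CPU = Path("/sys/devices/system/cpu")


def set_cpu_governor(governor: str = "performance",
                     cpu_root: Path = SYSFS_CPU) -> dict[str, str]:
    """Write `governor` for every cpuN under cpu_root (requires root on real sysfs).

    Returns the previous governor per CPU so it can be restored afterward.
    """
    previous: dict[str, str] = {}
    # "cpu[0-9]*" matches cpu0, cpu1, ... but not the cpufreq/ or cpuidle/ dirs.
    for gov_file in sorted(cpu_root.glob("cpu[0-9]*/cpufreq/scaling_governor")):
        cpu = gov_file.parent.parent.name
        avail_file = gov_file.parent / "scaling_available_governors"
        if avail_file.exists() and governor not in avail_file.read_text().split():
            raise ValueError(f"{cpu}: governor {governor!r} is not available")
        previous[cpu] = gov_file.read_text().strip()
        gov_file.write_text(governor)
    return previous


def restore_cpu_governors(previous: dict[str, str], cpu_root: Path = SYSFS_CPU) -> None:
    """Put back the governors returned by set_cpu_governor."""
    for cpu, governor in previous.items():
        (cpu_root / cpu / "cpufreq" / "scaling_governor").write_text(governor)
```

Restoring the old governor afterward matters on laptops and phones, where leaving `performance` pinned drains the battery and heats the device, which then skews the next run.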

CEO/Producer translation: this is how you stop greenlighting work on fake wins or rejecting it over fake regressions. A deterministic consistent profiling scenario (CPS) cuts review tax, shortens iteration time, and tells you whether a build is truly better or just luckier.

The members-only module is the full step-by-step playbook: how to build the scene, how to automate the runs, how to deal with CPU governors and thermal noise, and how to turn the results into charts and repeatable reports instead of profiler folklore.

In this module:

1. Introduction to February 2022
2. Main Lesson
3. Building an Automated Performance Testing Scenario + Bonus
4. Reducing Profiling Noise from Varying CPU Frequencies

Join to unlock the full module, audio, and resources.